The Harness Is the Product: AI Harness Engineering for Systems That Survive Reality

AI harness engineering is where strategy meets execution. A deep look at what an AI harness is, why teams build them badly, and how to set one up using the latest OpenAI and Claude conventions.

Most AI projects do not fail at the model. They fail at the seam where the model meets the world.

You can swap GPT-5 for Claude Opus, fine-tune on a proprietary dataset, run an evaluation suite of a thousand prompts, and still watch the system collapse the moment a real user asks a real question that does not appear in the test set. The model is not the bottleneck. The harness around it is.

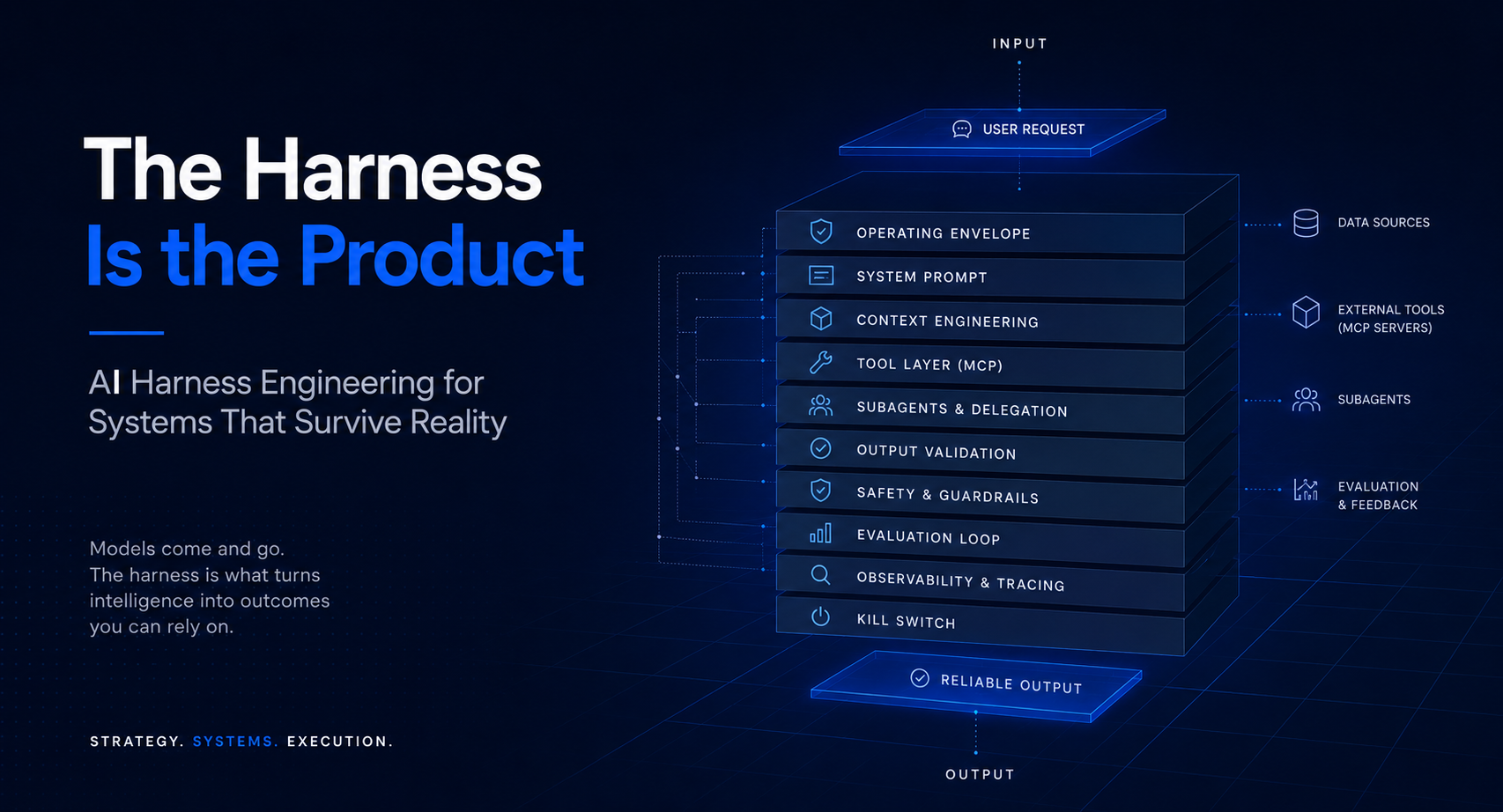

The harness is everything that wraps the model: the system prompt, the tool registry, context management, retry logic, output validators, safety layers, observability, and the evaluation loop. It is the operating envelope inside which a model becomes an agent. And in 2026, with both OpenAI and Anthropic publishing increasingly opinionated rules for how that envelope should be built, the discipline has finally earned a name. We call it AI harness engineering, and at Tasawom we treat it as the most important infrastructure decision an organisation makes after picking a foundation model.

This article does three things. It explains what an AI harness actually is, in concrete terms. It walks through the harness conventions that OpenAI and Anthropic have converged on over the past eighteen months. And it gives you a step-by-step blueprint for setting one up. If you ship anything that calls a large language model in production, this is the layer where your unit economics, your customer trust, and your roadmap velocity actually live.

Key highlights

- The harness, not the model, decides whether an AI system holds up under production load

- OpenAI and Anthropic have converged on a shared set of harness primitives: typed tools, structured outputs, context engineering, subagents, and protocol-level interoperability through MCP

- A modern harness is built in eight layers, from the operating envelope through to a kill switch

- Treating the harness as a product surface, not as plumbing, is the single highest-leverage move a team can make once a model choice is settled

What an AI harness actually is

A harness is the runtime scaffolding around a language model. The model itself is a stateless function. Tokens go in, tokens come out. Everything that turns that function into something a human can rely on (memory, tools, judgement, recovery) lives in the harness.

The simplest possible harness has three parts. A system prompt that tells the model who it is and what it is allowed to do. An input pipe that feeds the user's request. An output pipe that returns the answer. The simplest possible harness is also the one that fails first.

A production harness has more layers. The system prompt is generated dynamically based on user state, role, and the tools available right now. A tool layer lets the model call APIs, query databases, or invoke other agents. A context manager decides what stays in the window and what gets summarised, retrieved, or pruned. An output validator checks the response against a schema before it ever reaches the user. A retry layer handles partial failures. A safety layer blocks unsafe outputs. An evaluation layer scores each response. An observability layer captures every trace for later analysis.

Each of those layers used to be ad-hoc. Engineers wired them together with whatever framework felt least painful that month. The result was harness debt: the accumulating tax of every undocumented decision, every brittle string concatenation, every silent retry that masked a real bug.

The harness rules published by OpenAI and Anthropic over the past year exist because both companies watched their customers drown in this debt. The rules are an attempt to standardise the seams.

Why most teams build harnesses badly

Three failure modes recur in teams that build AI systems without a deliberate harness strategy.

The first is prompt engineering as a cottage industry. A senior engineer writes a long, careful system prompt that works beautifully for the demo. Six months later the prompt is fourteen thousand tokens long, nobody on the team understands every clause, and modifying it is the highest-risk change anyone can make. The team has confused a prompt with a contract. A contract is versioned, tested, and bounded. A prompt that has accreted instructions like rings on a tree is none of those things.

The second is the demo-to-production gap. The system works in a controlled environment with clean inputs, friendly users, and a narrow scope. Production introduces three things the harness was never designed for: adversarial inputs, drift in the underlying tools, and tail-distribution requests. Each of these requires a specific harness layer that was not in the original design. Teams patch around them with retries, guardrails bolted on after the fact, and increasingly desperate prompt amendments.

The third is the orchestration tax. Once a single agent is not enough, teams reach for orchestration frameworks. Chains, graphs, agents-of-agents. The frameworks promise composability and deliver, instead, a maze of indirection in which a single user request might spawn forty model calls, each with its own latency, error budget, and failure mode. The team has now built a distributed system on top of a stochastic primitive, without applying any of the operational discipline a distributed system requires.

The deeper diagnosis is that most teams treat the model as the product and the harness as plumbing. The economics flip the moment the model becomes a commodity. Once two or three foundation models are roughly interchangeable for your use case (and we are well past that point), the harness is what differentiates you. The harness is the product.

The 2026 harness rules from OpenAI and Anthropic

Both labs have shipped, in the past eighteen months, increasingly explicit guidance for how production harnesses should be structured. The conventions are not identical, but the convergence is striking. Six primitives now appear in both stacks.

Primitive one: tools as first-class citizens

Tool use is no longer a clever trick. It is the spine of any agentic system. Both OpenAI's tool calling API and Anthropic's tool use API treat tools as typed contracts. A tool has a name, a JSON Schema definition of its inputs, and a clearly bounded effect. The model's job is to decide when to call a tool, with what arguments, and what to do with the result.

The 2026 rule, in both stacks, is that tool definitions belong out of the system prompt and into a structured registry. Embedding tool descriptions in prose was the old way. The new way is a typed schema with semantic descriptions, validated at registration time, with input and output examples that drive both the model and your evaluation suite.

Primitive two: structured outputs by default

Free-form text outputs are the source of a disproportionate share of harness pain. OpenAI's Structured Outputs and Anthropic's tool-shaped outputs both let you constrain the model's response to a JSON Schema. The rule has become simple. If the output is going to be parsed by code, the output must be schema-constrained. If it is going to be read by a human, it can be free-form, but it should still pass through a validator before display.

Primitive three: context engineering as a discipline

Both labs now publish guidance on context engineering, the practice of deciding what goes into the model's context window and what stays out. The shared principles are: keep the window lean, prefer retrieval over inclusion, summarise long histories with the model itself, and use compaction or context editing when a session exceeds a budget. Anthropic's Claude Agent SDK ships explicit context-editing primitives. OpenAI's Responses API ships managed conversation state with similar semantics. The era of dumping every message into one ever-growing context blob is over, and teams that do not adapt will pay for it in tokens and accuracy.

Primitive four: subagents and delegation

A single model call cannot do everything well. Both stacks now treat subagents as a first-class pattern. The harness orchestrator defines a primary agent that plans and dispatches, and one or more specialist subagents that execute. Subagents typically run in their own context window with their own tool subset, returning a summary to the parent. The Anthropic guidance is especially clear here: subagents are how you keep parent contexts small and parent agents focused. They are also how you isolate failure. A subagent that fails returns a structured error, not a corrupted parent context.

Primitive five: protocol-level interoperability via MCP

The Model Context Protocol, originated by Anthropic and now adopted by OpenAI's tooling and several open-source frameworks, standardises how agents discover and call external systems. An MCP server exposes resources, tools, and prompts over a defined transport. Any MCP-compatible host can connect. The strategic implication is enormous. Integrations are no longer per-vendor wiring jobs, they are protocol implementations. A team that builds against MCP today gets future portability for free, and the cost of writing tools that way is no higher than the cost of writing bespoke integrations.

Primitive six: reasoning and thinking modes

Both OpenAI and Anthropic now expose explicit reasoning modes at the API level. Extended thinking, deliberation, chain-of-thought controls, however the specific provider chooses to label it. The harness rule is to use them deliberately. Reasoning mode is expensive in both latency and tokens. It belongs in tasks where the model genuinely needs to plan or verify, not on every call. The harness should decide when to invoke it based on task type, not let the model invoke it everywhere.

These six primitives are the contract layer of the modern harness. A team that understands and uses them is operating at a different altitude than a team still concatenating strings into a prompt.

How to set up a harness, step by step

The following is the structure we use at Tasawom when designing harnesses for client systems. It scales from a single-purpose internal tool to a multi-agent customer-facing platform. Each step is a layer. Skipping a layer is technical debt.

Step one: define the operating envelope

Before any code is written, the harness team defines, in plain language, what the agent is allowed to do, what inputs it is designed to handle, what outputs it is required to produce, and what failure looks like. This is the operating envelope. It is the document that every other artefact in the harness derives from: the system prompt, the tool registry, the evaluation set, the safety layer.

A useful operating envelope answers six questions. Who is the user. What problem is the agent solving. What is in scope. What is out of scope. What does success look like. What is the worst outcome we are willing to tolerate. The last question is the most important. If you cannot articulate the worst tolerable failure, you cannot design guardrails for it.

Step two: choose the model substrate

Pick the smallest model that can perform the task at acceptable quality. The cost of a too-small model is task failure. The cost of a too-large model is ten times the inference bill and twice the latency. Run your evaluation set across at least two models and one provider boundary before committing.

The 2026 rule is that no harness should hardcode a single model. The substrate is a configuration, not a constant. A well-designed harness lets you swap Claude for GPT, or vice versa, with a configuration change and a re-run of the evaluation suite. If your code path knows which provider it is talking to, you have built a coupling that will hurt you within twelve months.

Step three: design the system prompt as a runtime contract

The system prompt is not a freeform note to the model. It is a contract. Treat it like one. Version it. Test it. Document each clause. Keep it under a budget. We typically aim for fewer than three thousand tokens for the static portion, with dynamic context appended at runtime.

A modern system prompt has four sections. Identity (who the agent is). Capabilities (what tools and resources it has access to). Constraints (what it must not do). Output format (how it should respond). Anything that does not fit one of those four sections probably does not belong in the system prompt.

Pro Tip: When the system prompt grows past three thousand tokens, the right move is almost never to keep adding instructions. It is to factor a portion out into a tool, a subagent, or a retrieval-augmented context block. Bloated prompts are a symptom that the harness is doing the model's job for it.

Step four: wire tools through MCP where possible

Define every external capability the agent needs as a tool. Use JSON Schema for the input and output contracts. Where the capability is exposed by an existing MCP server, prefer that path. You get observability, retries, and discovery for free. Where you build your own, build it as an MCP server from day one even if you are the only consumer. The protocol overhead is negligible and the optionality is immense.

Each tool needs three artefacts. The schema. A semantic description that helps the model decide when to use it. At least three input/output examples. The examples drive both prompt-time guidance and evaluation. A tool without examples will be misused.

There is a second-order benefit to MCP that teams often miss. When your tools live behind a protocol, you can audit them independently, swap implementations, and let multiple agents share the same tool registry. The wiring becomes a product surface in its own right, and your engineers stop rewriting the same integration five times for five different agents.

Step five: engineer context management

Decide, at design time, how the context window will be managed across the lifecycle of a session. The relevant levers are inclusion (what gets put in the window), retrieval (what gets fetched on demand), summarisation (how long histories get compacted), and editing (how the harness rewrites context when a budget is approached).

Both Anthropic and OpenAI now expose primitives for each of these. Use them. The team that builds context management on top of raw string concatenation in 2026 is choosing to debug the same class of bug for the next two years. Context engineering is where the difference between an amateur agent and a production agent becomes most visible. An amateur agent forgets what it was doing five turns ago. A production agent remembers exactly the right things and discards the rest.

Step six: build the evaluation loop

An evaluation loop is not a one-time test suite. It is a continuous process that runs every time the system prompt, a tool definition, or a model version changes. The loop has three components. A labelled dataset of representative inputs. A scoring function that measures correctness on each input. An automation layer that runs the suite on every change and gates deploys.

A good evaluation set has three tiers. The canonical examples, which are the cases the agent must always handle. The tail, which is adversarial inputs, edge cases, and ambiguous requests. The regression set, which is cases that previously failed and have since been fixed. Each tier earns a different threshold for ship-readiness.

Pro Tip: The single highest-leverage harness investment most teams under-make is the evaluation loop. A team with a thousand-input evaluation suite running on every change will outpace a team with a better model and no eval suite. The eval set is the harness's nervous system.

The most common mistake here is treating evaluation as something that happens at the end. By then it is too late. Evaluation should drive design, not validate it. When you write a new tool, the first artefact is not the implementation, it is the eval cases that define when the tool should be called and what good looks like when it is.

Step seven: add observability and feedback

Every model call should produce a trace. Input, system prompt, tool calls, intermediate outputs, final response, latency, token cost. The traces feed two systems. The first is operational observability, with alerts when latency or error rates breach thresholds. The second is the evaluation flywheel. Traces from production become the source of new test cases when something interesting happens.

The 2026 rule is that traces are infrastructure, not nice-to-have. If you cannot reproduce a production failure from a trace, the harness is incomplete. The good news is that both major providers now ship trace export out of the box, and the open-source observability ecosystem (LangSmith, Langfuse, Arize, Phoenix, others) has matured to the point where rolling your own is no longer the default answer.

Step eight: ship a kill switch

Every harness needs a way to disable, downgrade, or redirect itself without a deploy. This is the kill switch. It can be a feature flag. A config file. A runtime check against a remote configuration service. It does not matter what it is, only that it exists. The day you need a kill switch and do not have one is a day you will remember.

The kill switch should support three actions, not just one. Full disable, where the harness stops accepting requests and routes users to a fallback. Downgrade, where the harness switches to a cheaper or simpler model variant. Redirect, where specific cohorts or specific requests are routed to a different harness version. Most teams ship full disable and stop there. The mature versions of all three are what separate a harness you can operate from a harness you can only pray over.

The Tasawom view: the harness is the product

The reason we treat AI harness engineering as a strategic discipline at Tasawom is not philosophical. It is economic. In a world where foundation models are commoditising at speed, the harness is the only durable surface. A team that ships a thoughtful harness on a commodity model will out-execute a team with a marginally better model and a careless harness, every time, in every market we have observed.

Modular architecture, headless integration patterns, and recursive feedback loops apply with double force to AI harnesses. A modular harness lets you swap models without rewrites. A headless harness lets you serve the same agent through web, API, voice, or embedded contexts without duplicating logic. A recursive feedback loop, where production traces become tomorrow's evaluation set, turns the harness into a system that improves itself with use rather than degrades.

The strategic move for the next eighteen months is not to chase the latest model release. It is to invest in the harness layer until your team can swap models in an afternoon and ship a new agent in a week. That is the operational velocity that separates teams shipping AI demos from teams shipping AI products. And it is the velocity that compounds. Every harness improvement makes the next agent cheaper to ship. Every cheap agent shipped earns the data that makes the harness better.

This is what we mean when we say the harness is the product. The model is a substrate. The agent is an artefact. The harness is the part that survives the next model release, the next provider change, the next pivot in your business. It is where your moat actually lives.

Strategic takeaways

-

Decouple the model from the harness. Treat the model as configuration. Run your evaluation suite across at least two providers before committing. The team that hardcodes a single model is choosing to be held hostage by it.

-

Adopt MCP early. Build tools as MCP servers from day one, even when you are the only consumer. The protocol is the seam where the next decade of AI integration will happen, and the cost of adopting it now is zero compared to retrofitting later.

-

Treat the system prompt as a contract. Version it, test it, budget its tokens. When it grows past three thousand tokens, refactor it into tools, subagents, or retrieval. Do not keep accreting instructions.

-

Build the evaluation loop before you need it. A small but representative eval set, run on every change, is the single highest-leverage investment a harness team can make. It is the difference between a system that improves and a system that drifts.

-

Ship a kill switch with three modes. Full disable, downgrade, and redirect. Build it on day one, not on the day of your first incident.

The harness is where AI strategy meets execution. It is also where most teams underinvest. The teams that get it right do not just ship better AI products. They ship them faster, change direction more cheaply, and recover from failures in hours instead of quarters.